Computational biology is rapidly evolving with the advent of new technologies, especially in the way we collect, analyze and visualize data. Dr. Ragothaman Yennamalli, a Kolabtree freelancer and scientist, examines four promising advances.

Following up on the previous introductory post, here I highlight some recent advances in the biological sciences that are transforming computational biology. These advances rely heavily on computational tools and methods such as big data analysis and multiscale modelling. Four of them are described below.

1. Big Data

This term is well known in computer science but has been picked up by biologists only recently. Thanks to next-generation sequencing techniques, the sequence of a genome can now be obtained in a relatively short time. The ability to generate data quickly matters even more when working with metagenomic data or a microbiome, where many genomes are sequenced at once. How can one manage the data? What about long-term storage? What tools exist for analyzing such massive data? These questions do have answers. As mentioned, big data is a recent trend in biology but not in computer science or experimental physics, where handling and analyzing it is routine.

One active area of research is the file formats used for biological big data. For protein structures, the long-standing standard is the .pdb format, a column-dependent format that is easy to parse and readable by both humans and machines. However, its fixed-width columns limit the number of atoms and chains a single file can hold, so it fails for very large structures such as the ribosome or complete viral capsids. A newer format called PDBx (also known as PDBx/mmCIF) has been proposed to overcome these limitations. Another format, MMTF, reduces loading times so that structures with more than 20 million atoms can be loaded within seconds.
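To make the column dependence concrete, here is a minimal sketch in Python of reading and writing a simplified ATOM record. The column positions follow the published PDB format, but the `Atom` type and both helper functions are hypothetical names introduced for illustration, and only a subset of the fields is handled (atom-name alignment, for example, is simplified).

```python
from typing import NamedTuple

# The atom serial number occupies columns 7-11, so five digits is the ceiling.
MAX_SERIAL = 99_999


class Atom(NamedTuple):
    serial: int
    name: str
    res_name: str
    chain_id: str
    res_seq: int
    x: float
    y: float
    z: float


def format_atom_line(atom: Atom) -> str:
    """Write a simplified fixed-width ATOM record (coordinates end at column 54)."""
    if atom.serial > MAX_SERIAL:
        raise ValueError("atom serial number does not fit in five columns")
    return (
        f"ATOM  {atom.serial:>5d} {atom.name:<4s} {atom.res_name:>3s} "
        f"{atom.chain_id:1s}{atom.res_seq:>4d}    "
        f"{atom.x:8.3f}{atom.y:8.3f}{atom.z:8.3f}"
    )


def parse_atom_line(line: str) -> Atom:
    """Read fields back purely by column position; no delimiters are involved."""
    return Atom(
        serial=int(line[6:11]),
        name=line[12:16].strip(),
        res_name=line[17:20].strip(),
        chain_id=line[21],
        res_seq=int(line[22:26]),
        x=float(line[30:38]),
        y=float(line[38:46]),
        z=float(line[46:54]),
    )


atom = Atom(1, "N", "MET", "A", 1, 27.340, 24.430, 2.614)
line = format_atom_line(atom)
print(line)
print(parse_atom_line(line))
```

Because every field lives at a fixed position, a structure with more than 99,999 atoms has no valid serial number in this layout, which is one reason very large assemblies need a different format.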

Further reading on big data in computational structural biology:

http://science.sciencemag.org/content/355/6322/248

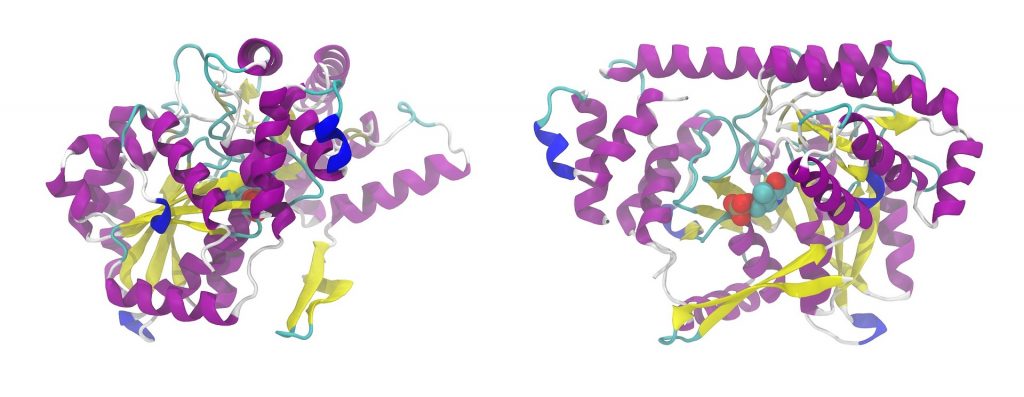

2. Cryo-EM and XFEL techniques

Neither method is new as such. However, current advances in both fields are pushing the limits of biomolecular structure analysis. Cryo-electron microscopy (cryo-EM) captures the three-dimensional structure of a biomolecule at high resolution using an electron microscope. One of the pioneering labs at the NIH solved a structure at 2.5 Å. Such resolution is usually obtained from protein crystal structures, which routinely take at least 1-2 months of work to optimize a crystal suitable for exposure to the x-ray beam.

In contrast, the X-ray free-electron laser (XFEL) method is a recent technique that is revolutionizing structural biology. It involves shooting high-intensity x-ray beams at microcrystals of proteins. The radiation is so intense that each microcrystal is literally burned up as its data are collected. Tens of thousands of microcrystals are therefore required to obtain a dataset with decent coverage, and the image captured from each microcrystal has to be analyzed together with the rest to get the 3D structure of the biomolecule.

Such techniques depend heavily on automated software that uses image-processing algorithms and, to some extent, machine learning to separate the signal from the surrounding noise. This is big data too, since the diversity and velocity at which information is acquired are enormous.
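One reason so many images are needed can be illustrated with a toy example: averaging many noisy measurements of the same signal reduces the noise by roughly the square root of the number of measurements. The sketch below uses a one-dimensional sine wave as a stand-in for an image; it is only an illustration of the statistics, not how any particular cryo-EM or XFEL package works, and it ignores the alignment and orientation steps that real pipelines must perform first. All function names and parameter values are assumptions chosen for the example.

```python
import math
import random


def noisy_copy(signal, sigma, rng):
    """Return the signal with independent Gaussian noise added to each point."""
    return [s + rng.gauss(0.0, sigma) for s in signal]


def average(copies):
    """Point-by-point mean of a stack of equally long measurements."""
    n = len(copies)
    return [sum(values) / n for values in zip(*copies)]


def rms_error(estimate, truth):
    """Root-mean-square deviation between an estimate and the true signal."""
    squared = sum((e - t) ** 2 for e, t in zip(estimate, truth))
    return math.sqrt(squared / len(truth))


rng = random.Random(0)  # fixed seed so the run is repeatable
truth = [math.sin(2 * math.pi * i / 64) for i in range(64)]

single = noisy_copy(truth, 2.0, rng)                         # one noisy "image"
stack = [noisy_copy(truth, 2.0, rng) for _ in range(400)]    # many noisy "images"
merged = average(stack)

print("error of one measurement:", rms_error(single, truth))
print("error after averaging 400:", rms_error(merged, truth))
```

With 400 copies the error should drop by a factor of about 20, which is why a single noisy image is of little use on its own while tens of thousands together can be.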

3. Multiscale modelling

Instead of modelling a single biomolecular structure and extrapolating to a more complex system, multiscale modelling simulates the whole system, often more than 200,000 atoms. The resulting dynamics reveal interactions over long time ranges and the complex behavior of multiple components (either homogeneous or heterogeneous). The data generated from such simulations are massive, both because of the number of data points obtained and because multiple runs are needed for statistical significance.
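A back-of-the-envelope estimate shows how quickly the data add up. The sketch below assumes coordinates are stored as single-precision (4-byte) values, three per atom per saved frame; the frame count and number of runs are hypothetical values picked for illustration, not figures from any specific study.

```python
def trajectory_bytes(n_atoms, n_frames, n_runs=1, bytes_per_coord=4):
    """Raw size of the stored coordinates: x, y and z for every atom in every frame."""
    return n_atoms * 3 * bytes_per_coord * n_frames * n_runs


# 200,000 atoms, 10,000 saved frames per run, 5 independent runs (assumed values)
size = trajectory_bytes(n_atoms=200_000, n_frames=10_000, n_runs=5)
print(f"{size / 1e9:.0f} GB")  # 120 GB
```

Velocities, energies and other per-frame quantities would add to this total, so the figure is a lower bound under these assumptions.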

One example is the use of multiscale modelling to understand the dynamics of the cellulosome, a bacterial complex made of heterogeneous proteins and enzymes that attaches to cellulose. Cellulosomes are industrially important for biofuels, specifically bioethanol production.

Further reading: http://www.ks.uiuc.edu/Research/biofuels/

4. Single cell sequencing

Instead of looking at many cells in bulk, the latest technique is to isolate individual cells, extract the RNA from each, and sequence it. This technique is called single-cell RNA sequencing, or scRNA-seq. A Nature article discussing the method and its advantages notes:

It’s much more difficult to manipulate individual cells than large populations, and because each cell yields only a tiny amount of RNA, there’s no room for error. Another problem is analysing the enormous amounts of data that result — not least because the tools used can be unintuitive.

An excellent review of the workflow and tools for scRNA-seq is given here: https://doi.org/10.3389/fgene.2016.00163

